Obviously the consequences of blocking URLs by mistake can have a huge impact on visibility in the search results.

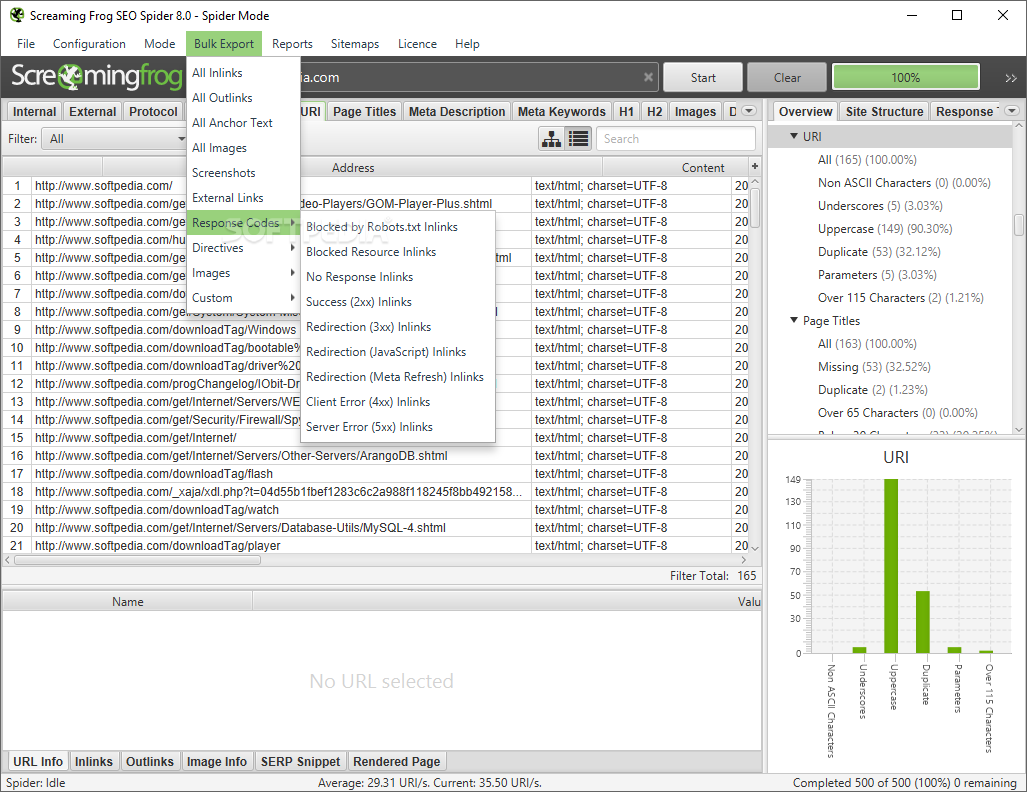

While robots.txt files are generally fairly simple to interpret, when there’s lots of lines, user-agents, directives and thousands of pages, it can be difficult to identify which URLs are blocked, and those that are allowed to be crawled. You can view a sites robots.txt in a browser, by simply adding /robots.txt to the end of the subdomain (for example). All major search engine bots conform to the robots exclusion standard, and will read and obey the instructions of the robots.txt file, before fetching any other URLs from the website.Ĭommands can be set up to apply to specific robots according to their user-agent (such as ‘Googlebot’), and the most common directive used within a robots.txt is a ‘disallow’, which tells the robot not to access a URL path. How To Test A Robots.txt Using The SEO SpiderĪ robots.txt file is used to issue instructions to robots on what URLs can be crawled on a website.
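To make the idea of user-agent groups and ‘disallow’ rules a little more concrete, here is a minimal sketch using Python's standard `urllib.robotparser` module. It is not how the SEO Spider works internally; the robots.txt content, the `example.com` URLs and the `blocked_urls` helper are all made up for illustration. Note also that Python's parser applies the first matching rule, which can differ from how individual search engines resolve conflicting rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, invented for this example.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""


def blocked_urls(robots_txt, user_agent, urls):
    """Return the URLs that the given user-agent is not allowed to fetch."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical URLs to check against the rules above.
urls = [
    "https://example.com/",
    "https://example.com/private/report.html",
    "https://example.com/tmp/cache.html",
]

print(blocked_urls(ROBOTS_TXT, "Googlebot", urls))
print(blocked_urls(ROBOTS_TXT, "OtherBot", urls))
```

With these made-up rules, ‘Googlebot’ matches its own group and is only blocked from the /private/ URL, while any other robot falls back to the `*` group and is only blocked from the /tmp/ URL. Even in a tiny file like this, which URLs are blocked depends on which user-agent is asking, which is why larger files quickly become hard to check by eye.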
